Unlocking the True Logical Potential of AI

In prompt engineering, empirical practice has shown that the way we ask an AI to "think" changes not only the style of the result but also its logical quality and mathematical correctness. One of the most effective and most widely studied techniques, including in academic research, is the so-called Chain of Thought (CoT). Instead of posing a complex problem and asking for an immediate solution, we instruct the AI to lay out, step by step, the intermediate links of its reasoning before giving its final answer.

This approach is often explained by analogy with human cognitive psychology, in particular the well-known distinction between fast thinking (System 1, intuitive but prone to error) and slow thinking (System 2, analytical and slower, but more reliable). By making the AI write out the logical steps it is taking, we make the model condition each new token on the intermediate results it has already produced. This keeps the mathematical and logical context in view and substantially reduces, though it does not eliminate, the probability that the model makes "leaps in the dark" to incorrect conclusions, a common failure when it answers directly.

Let's take a practical example. If you ask an AI to solve a complex corporate budget allocation problem, do not just enter the data and ask for the final answer. Structure the prompt along these lines: "Begin by carefully analyzing the premises, then identify the financial variables and bottlenecks, evaluate the pros and cons of three different investment strategies, justifying each assessment, and, only at the end of this process, propose the best possible allocation, showing the partial calculations". Seeing the reasoning broken down not only tends to produce better results, but also gives you, as a human supervisor, the opportunity to review the logic and identify where the machine might have taken a wrong path or made a trivial calculation error.
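The budget prompt above can also be assembled programmatically, so that the same step structure is reused across problems. The following is a minimal sketch, not a definitive implementation: the function name `build_cot_prompt`, the `Final answer:` marker, and the sample problem text are all assumptions made for illustration, and no particular model API is implied.

```python
def build_cot_prompt(problem: str, steps: list[str]) -> str:
    """Wrap a problem statement in explicit step-by-step instructions.

    Hypothetical helper: it only builds the prompt text and does not
    call any model.
    """
    if not steps:
        raise ValueError("at least one reasoning step is required")
    # Number the steps so the model is asked to follow them in order.
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"Problem:\n{problem.strip()}\n\n"
        "Work through the following steps in order, showing your reasoning "
        "and partial calculations for each one:\n"
        f"{numbered}\n\n"
        "Only after completing every step, state your conclusion on a line "
        "starting with 'Final answer:'."
    )


# Steps taken from the budget example in the text.
BUDGET_STEPS = [
    "Analyze the premises.",
    "Identify the financial variables and bottlenecks.",
    "Evaluate the pros and cons of three different investment strategies.",
    "Propose the best allocation, showing the partial calculations.",
]

# The problem statement below is invented for illustration only.
prompt = build_cot_prompt(
    "Allocate next year's budget across three departments.", BUDGET_STEPS
)
print(prompt)
```

Keeping the steps in a list makes it easy to review or reorder them before anything is sent to a model, and the fixed final-answer marker gives the reviewer a predictable place to look for the conclusion.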

Parallel Evolution: Tree of Thoughts (ToT)

A further development of this first technique is the Tree of Thoughts (ToT). In this heavier, more elaborate mode, we ask the AI not to follow a single linear logical chain A-B-C, but to generate and test multiple reasoning branches in parallel, exploring several possible scenarios. Imagine, for example, having to plan the launch of a new hardware product in a saturated market. A popular prompt-only way to apply the Tree of Thoughts idea is to instruct the AI to simulate a three-way conference between distinct, well-defined experts (a cynical finance expert, an aggressive viral marketing expert and a skeptical logistics manager). Each simulated "expert" develops and presents an initial idea for the launch. Then, in the next prompt, you ask the AI to make these simulated experts critique one another, each looking for logical fallacies in the proposals of the other two, so that the weak "branches of the tree" are discarded and the surviving ideas are synthesized into a single, stronger plan (a step often called Self-Refinement).
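The two-prompt expert conference described above can be sketched as follows. This is an illustrative outline under stated assumptions: `Ask` stands for whatever function sends a prompt to a model and returns its reply (no real API is implied), `echo_model` is a placeholder that returns no real reasoning, and all function names and the task string are hypothetical.

```python
from typing import Callable

# Hypothetical signature: takes a prompt, returns the model's reply.
Ask = Callable[[str], str]

# The three personas named in the text.
PERSONAS = [
    "a cynical finance expert",
    "an aggressive viral marketing expert",
    "a skeptical logistics manager",
]


def proposal_prompt(persona: str, task: str) -> str:
    """First round: one expert presents an initial idea."""
    return (
        f"You are {persona}. Task: {task}\n"
        "Present your initial idea and explain your reasoning step by step."
    )


def critique_prompt(task: str, proposals: dict[str, str]) -> str:
    """Second round: the experts critique each other, then one plan is synthesized."""
    listing = "\n\n".join(f"[{persona}]\n{text}" for persona, text in proposals.items())
    return (
        f"Task: {task}\n\nProposals:\n{listing}\n\n"
        "Have each expert look for logical fallacies in the other two proposals. "
        "Discard the weak proposals and synthesize the surviving ideas into one plan."
    )


def run_panel(task: str, personas: list[str], ask: Ask) -> str:
    """Collect one proposal per persona, then ask for critique and synthesis."""
    proposals = {p: ask(proposal_prompt(p, task)) for p in personas}
    return ask(critique_prompt(task, proposals))


def echo_model(prompt: str) -> str:
    """Placeholder standing in for a real model call."""
    return f"(model reply to a {len(prompt)}-character prompt)"


# Illustrative task text, not taken from a real project.
print(run_panel("Plan the launch of a new hardware product.", PERSONAS, echo_model))
```

With three personas this makes four model calls: three proposals and one combined critique. Passing the model call in as a parameter keeps the prompt logic testable without network access.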

These techniques change how AI is used, taking it from a mere document database (a sort of talking Google) to a capable engine for reasoning and deduction. They are particularly useful in delicate areas such as advanced software development, where a single conceptual error in the initial architecture can cascade through the entire system and compromise how it works, or in administration and strategic planning, where the variables at stake are too many and too interconnected for a simple linear approach to be of much use. Mastering these strategies ultimately means knowing how to supervise and manage Artificial Intelligence no longer as a convenient but stupid typing tool, but rather as if one were presiding over a crowded, competent team of quarrelsome graduate consultants.

Practical Implementation of Cognitive Reasoning

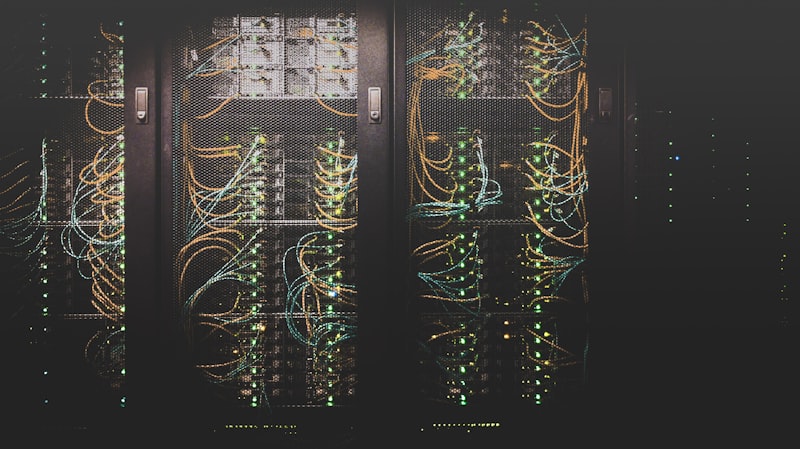

The integration of "Chain of Thought" techniques is rapidly evolving toward autonomous systems (Agents) in which these reasoning chains are largely hidden from the end user. In some enterprise platforms, when a user asks a complex question, the system does not immediately return text. Instead, it triggers a background group of smaller models that orchestrate a *Tree of Thoughts*: a "Planner" model breaks the task into sub-tasks (for example, five); three "Researcher" models verify calculations or web data; a "Critic" model evaluates the partial findings and filters out logic errors; and finally, a "Synthesizer" model assembles the polished, checked final answer.
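The Planner, Researcher, Critic and Synthesizer flow described above can be sketched as a small pipeline. This is a toy outline, not how any specific platform is implemented: every role is a plain Python function standing in for a model, the round-robin assignment of sub-tasks to researchers is an assumption, and all names (`orchestrate`, `Finding`, the `stub_*` functions) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    subtask: str
    result: str
    approved: bool = False


def orchestrate(
    question: str,
    planner: Callable[[str], list[str]],
    researchers: list[Callable[[str], str]],
    critic: Callable[[Finding], bool],
    synthesizer: Callable[[str, list[Finding]], str],
) -> str:
    """Plan, research, critique, then synthesize (each role is a stand-in for a model)."""
    subtasks = planner(question)
    findings = []
    for i, subtask in enumerate(subtasks):
        # Assumption: sub-tasks are handed to researchers in round-robin order.
        researcher = researchers[i % len(researchers)]
        findings.append(Finding(subtask, researcher(subtask)))
    for finding in findings:
        finding.approved = critic(finding)
    # Only findings the critic accepted reach the synthesizer.
    accepted = [f for f in findings if f.approved]
    return synthesizer(question, accepted)


# Stub roles so the sketch runs without any model.
def stub_planner(question: str) -> list[str]:
    return [f"{question} / part {n}" for n in range(1, 6)]  # five sub-tasks


def make_researcher(name: str) -> Callable[[str], str]:
    def research(subtask: str) -> str:
        return f"{name} checked '{subtask}'"
    return research


def stub_critic(finding: Finding) -> bool:
    # Toy rule for demonstration: reject whatever was found for part 3.
    return "part 3" not in finding.subtask


def stub_synthesizer(question: str, accepted: list[Finding]) -> str:
    lines = "\n".join(f"- {f.result}" for f in accepted)
    return f"Answer to: {question}\n{lines}"


team = [make_researcher(n) for n in ("R1", "R2", "R3")]
print(orchestrate("Is the forecast sound?", stub_planner, team, stub_critic, stub_synthesizer))
```

In a real system each stub would be replaced by a model call, and the critic's rejections would typically be sent back for another research pass rather than silently dropped.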

This multi-phase orchestration can reduce the errors and biases that any single model would introduce on its own. By simulating a miniature board of directors, the models "argue" internally at high speed before presenting an agreed solution. This convergence is not an engineering luxury. It cannot by itself guarantee flawless formal, mathematical, or legal accuracy, but it is one of the more effective ways currently available to raise the reliability of results in sectors with very little tolerance for error, such as forensic financial auditing or complex predictive hospital operations.