What is RAG (Retrieval-Augmented Generation)?

One of the most significant structural limits of standard language models is that their knowledge is frozen at the moment their very expensive initial training ends. From that point on, an LLM is blind to whatever happens in the world. More importantly, it knows nothing about the specifics of your company's day-to-day work. If you want an AI to answer detailed questions about your latest confidential documents, discuss the technical manuals of your proprietary products, or keep up with your changing internal HR procedures, you cannot rely on the general knowledge its builders baked into the model. That would be an illusion. This is exactly the gap the RAG (Retrieval-Augmented Generation) architecture is designed to close: instead of relying only on what the model memorized during training, the system first retrieves the relevant passages from your own documents and then hands them to the model as context for generating its answer.

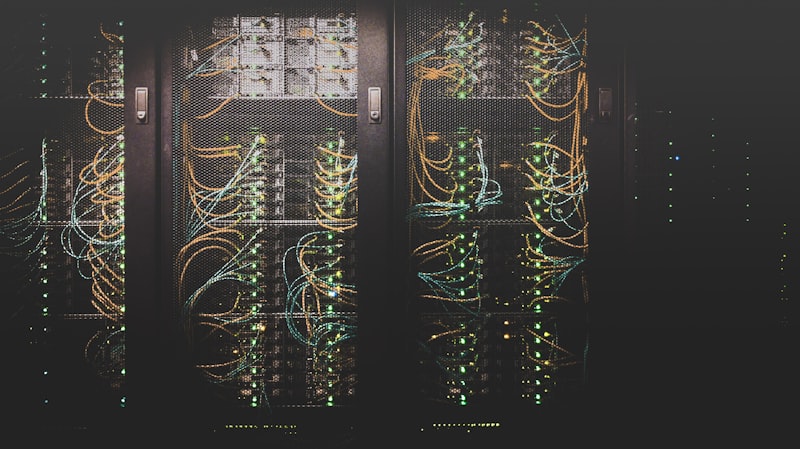
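
In a RAG pipeline, the system first retrieves the passages most similar to the user's question and then inserts them into the prompt, so the model answers from current documents rather than from its frozen training data. The following minimal Python sketch shows that loop under simplifying assumptions: `embed` is a hypothetical stand-in that counts words instead of calling a real embedding model, the sample documents are invented, and the final prompt would be sent to whichever LLM you use (no model call is shown). The names `embed`, `cosine`, `retrieve` and `build_prompt` are illustrative only.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Hypothetical toy "embedding": lowercase word counts. A real system
    # would call an embedding model here instead.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    # Retrieval step: rank every chunk by similarity to the query, keep the top k.
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    # Augmentation step: paste the retrieved text into the prompt so the model
    # can ground its answer in it.
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only the context below.\nContext:\n{joined}\nQuestion: {query}"


docs = [
    "Employees may carry over up to five vacation days into the next year.",
    "The X200 pump must be serviced every 500 operating hours.",
    "Expense reports are due by the fifth working day of each month.",
]
question = "How many vacation days can I carry over?"
print(build_prompt(question, retrieve(question, docs, k=1)))
```

With `k=1`, the vacation question retrieves only the vacation-policy sentence, so the printed prompt contains that sentence followed by the question; the generation step would then work from that text alone.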

Vector Management and Continuous Ingestion

Once a company adopts RAG (Retrieval-Augmented Generation), the real engineering challenge is not the initial setup but keeping the indexed knowledge base, the "Database Truth", current over time (continuous ingestion). When a single paragraph of a legal contract changes among thousands of archived documents, the vector for the outdated passage must be invalidated and the new passage re-embedded quickly, without reprocessing the whole archive. This hygiene of vectors and metadata is vital: RAG is only as reliable as the text blocks it retrieves, so those blocks must be up to date and free of contradictions.
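
One common way to keep the index in sync is to store a content hash next to each chunk's vector and re-embed only the chunks whose hash has changed, deleting any chunk that no longer exists. The sketch below assumes a plain in-memory dict as the vector store, keyed by `(doc_id, chunk_number)`, and reuses a hypothetical word-count `embed` function; a real deployment would rely on its vector database's own upsert and delete operations. `sync_document` and the sample contract text are illustrative, not taken from any particular product.

```python
import hashlib
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Hypothetical stand-in for a real embedding model call.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def sync_document(index: dict, doc_id: str, chunks: list[str]) -> dict:
    # index maps (doc_id, chunk_number) -> {"hash", "vector", "text"}
    stats = {"embedded": 0, "unchanged": 0, "deleted": 0}
    current_keys = set()
    for n, text in enumerate(chunks):
        key = (doc_id, n)
        current_keys.add(key)
        h = content_hash(text)
        entry = index.get(key)
        if entry is not None and entry["hash"] == h:
            stats["unchanged"] += 1  # identical text: keep the existing vector
            continue
        # New or modified chunk: overwrite (invalidate) any stale vector.
        index[key] = {"hash": h, "vector": embed(text), "text": text}
        stats["embedded"] += 1
    # Chunks that vanished from this document must be removed as well.
    stale = [k for k in index if k[0] == doc_id and k not in current_keys]
    for k in stale:
        del index[k]
    stats["deleted"] = len(stale)
    return stats


index: dict = {}
v1 = ["Clause 1: payment within 30 days.",
      "Clause 2: governing law.",
      "Clause 3: termination with notice."]
v2 = ["Clause 1: payment within 60 days.",
      "Clause 2: governing law."]
print(sync_document(index, "contract-42", v1))
# {'embedded': 3, 'unchanged': 0, 'deleted': 0}
print(sync_document(index, "contract-42", v2))
# {'embedded': 1, 'unchanged': 1, 'deleted': 1}
```

Only the edited clause is re-embedded and the removed clause is invalidated. Keying chunks by position keeps the example simple, but inserting a paragraph near the top of a document would shift every later position and trigger re-embedding of all of them; keying by content hash avoids that at the cost of more bookkeeping.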

Equally decisive is the "Chunking Strategy": the delicate art of splitting the source texts into pieces before vectorizing them. If paragraphs are chopped into pieces that are too small (e.g., single sentences), retrieval will be very precise but the chunks will lack the surrounding context the model needs to reason. If chunks are too long (entire pages of a manual), the vital information risks being diluted among irrelevant filler. Tuning this "bite size" to the type of corporate documentation is the hidden craft that separates a top-tier RAG engineer from an amateur.
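
A simple baseline is a fixed-size sliding window in which consecutive chunks overlap slightly, so text near a cut point appears in both neighbouring chunks. The minimal sketch below splits on whitespace and counts words; production pipelines more often count model tokens and prefer paragraph or heading boundaries. The `chunk_words` name and its defaults (200 words, 40 words of overlap) are illustrative assumptions, not recommended values.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words; neighbours share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reaches the end
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, size=4, overlap=1):
    print(chunk)
# w0 w1 w2 w3
# w3 w4 w5 w6
# w6 w7 w8 w9
```

Here `size` is the "bite size" knob: lowering it yields more precise but context-poor chunks, raising it yields richer but noisier ones, and `overlap` softens the damage done where a window boundary cuts through a sentence.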