Generative Artificial Intelligence and the New Logic of Creation

Generative Artificial Intelligence is not just a more powerful version of the software we have used for the past thirty years; it is an epochal shift in how humans interact with machines. For decades, we have used computers as executors that process existing data according to rigid rules. Today, for the first time, we use computers to generate content that did not previously exist: complex texts, photorealistic images, sounds, and functioning computer code.

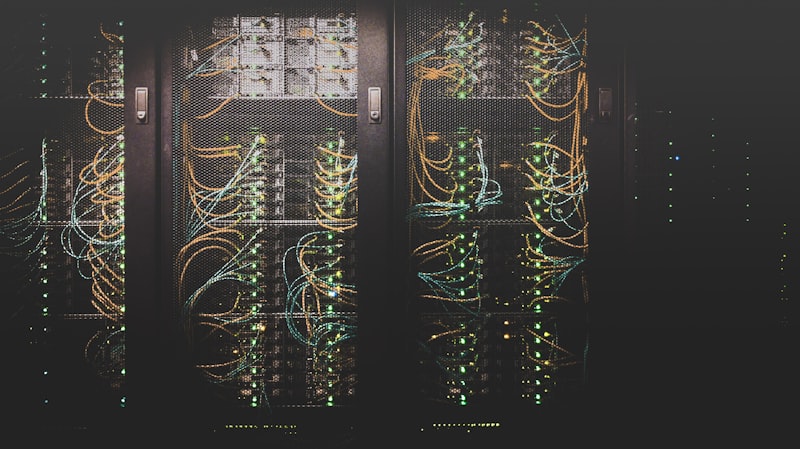

To understand this technology, we must look under the hood of language models, known as LLMs (Large Language Models). These systems do not "understand" reality as we do; they have no consciousness or sensory experience. They work by calculating probabilities on a monumental scale. When you write a question to an AI, the model splits your words into tokens (small units of text, such as words or word fragments) and uses the statistical patterns it learned during training, stored in its parameters, to estimate which token is most likely to come next. It does not look anything up in a database of its training data; it builds the answer one token at a time. It is a sort of evolved autocomplete that, thanks to unprecedented computing power, produces text that can closely resemble human reasoning.
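The next-token prediction described above can be illustrated with a minimal sketch. It assumes a model has already assigned a raw score to each candidate token; the prompt, the candidate tokens, and the scores in `toy_scores` are invented for illustration and do not come from any real model. The `softmax` function turns the scores into probabilities, and the most probable token is chosen.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the maximum for numerical stability
    exps = {token: math.exp(s - peak) for token, s in scores.items()}
    total = sum(exps.values())
    return {token: e / total for token, e in exps.items()}

# Hypothetical scores for candidate next tokens after the prompt
# "The cat sat on the". The numbers are invented for illustration.
toy_scores = {"mat": 4.0, "sofa": 3.0, "roof": 2.0, "moon": -1.0}

probs = softmax(toy_scores)

# Greedy choice: pick the single most probable token.
next_token = max(probs, key=probs.get)

for token, p in sorted(probs.items(), key=lambda item: -item[1]):
    print(f"{token:>5}: {p:.3f}")
print("chosen:", next_token)
```

Real systems often sample from this distribution instead of always taking the top token, which is one reason the same question can produce different answers.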

The Concept of Latent Space

The key concept for understanding the "creativity" of AI is that of "latent space". Imagine a vast multidimensional map where every word, every concept, and every image has a precise coordinate. The AI learns the complex mathematical relationships between these coordinates. It knows that "king" and "queen" are close, but it also captures that the step from "man" to "king" points in roughly the same direction, and covers roughly the same distance, as the step from "woman" to "queen". Moving in this vector space, the AI can create a practically unlimited number of combinations of concepts, allowing us to move from information retrieval to creative synthesis.
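The "king" and "queen" relationship can be sketched with a few hand-made vectors. This is a toy example under strong assumptions: the three dimensions (royalty, maleness, femaleness) and all the values in `vectors` are invented, whereas real models learn hundreds or thousands of dimensions from data, and the relationships hold only approximately. The hypothetical `analogy` helper computes "king" minus "man" plus "woman" and returns the closest remaining word.

```python
import math

# Hand-made toy vectors: (royalty, maleness, femaleness).
# The values are invented for illustration, not learned from data.
vectors = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.8, 0.1],
    "woman": [0.1, 0.1, 0.8],
    "apple": [0.0, 0.2, 0.2],
}

def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [word for word in vectors if word not in (a, b, c)]
    return max(candidates, key=lambda word: cosine(target, vectors[word]))

result = analogy("king", "man", "woman")
print(result)
```

With these toy values the nearest word is "queen", because removing the "man" offset from "king" and adding the "woman" offset lands on the same coordinates.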

This technology is transforming every professional and creative sector. In marketing, it allows messages to be customized for thousands of different segments in seconds while maintaining brand consistency. In programming, it accelerates code writing by suggesting entire architectures and helping with debugging. In everyday life, it serves as a universal and tireless tutor. However, the main challenge remains user awareness: AI is an incredibly knowledgeable and fast assistant, but it has no consciousness or innate ethics of its own. Like any very powerful tool, it must be guided with precision, ethics and, above all, an unwavering critical spirit to validate every result it produces.

The Evolution of Foundation Models

The current generation of language models, often referred to as "Foundation Models", is based on the Transformer architecture introduced by Google researchers in 2017. This architecture revolutionized natural language processing thanks to the self-attention mechanism, which allows the model to dynamically weigh the importance of each token with respect to all the others in the input sequence, regardless of their distance. This leap made it possible to capture complex semantic nuances and long-range dependencies that previous neural networks (such as RNNs) struggled to handle.
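The weighting step at the heart of self-attention can be sketched in a few lines. This is a simplified illustration, not a full Transformer layer: it assumes the queries, keys, and values are the token vectors themselves, whereas real models first multiply the inputs by learned projection matrices and use many attention heads. The token vectors in `tokens` are invented.

```python
import math

def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x.

    Simplification: the learned query, key and value projections
    used in real Transformers are omitted here.
    """
    d = len(x[0])
    weights = []
    for query in x:
        # Compare this token with every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in x
        ]
        weights.append(softmax(scores))
    # Each output is a weighted average of all token vectors.
    outputs = [
        [sum(w * value[j] for w, value in zip(row, x)) for j in range(d)]
        for row in weights
    ]
    return weights, outputs

# Three invented 2-dimensional token vectors; the first and third are similar.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.1]]
weights, outputs = self_attention(tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

In this toy sequence the first token gives more weight to the third token than to the second, even though the second is its immediate neighbour: the weights depend on similarity, not on position.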

The scalability of these models is another key factor. As researchers increased the number of parameters (the numerical weights of the network, loosely comparable to synaptic connections) and the size of the training dataset, "emergent capabilities" arose. Skills that were not explicitly programmed, such as translation, conceptual summarization, or coding, appeared once models reached a certain scale. Understanding this dynamic helps explain why the next generation of models is expected to handle analytical reasoning tasks at a level closer to human performance.