Awareness and Risks of AI: Beyond the Myth of Infallibility

Using AI professionally and strategically requires a clear awareness of its inherent limitations and of the serious risks it poses for information management. The best-known and most insidious problem is that of "hallucinations". Because a language model generates text by predicting statistically probable words rather than by consulting a database of verified facts, it can invent historical facts, dates, bibliographic citations, or even complex regulatory and legal references, and present them in a tone of absolute, reassuring certainty.
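To make the mechanism concrete, here is a deliberately tiny sketch of "choose the most probable next word". The probability table is invented for illustration and does not come from any real model; the point is only that the selection step looks at likelihood, never at truth.

```python
# Invented probabilities for the word that follows a prompt (an assumption for
# illustration); a real model computes such a distribution over its vocabulary.
NEXT_WORD_PROBS = {
    "The capital of Australia is": {
        "Sydney": 0.55,     # frequently mentioned, but wrong
        "Canberra": 0.40,   # correct, but less probable in this toy table
        "Melbourne": 0.05,
    },
}


def most_likely_next_word(prompt: str) -> str:
    """Return the highest-probability continuation; no fact source is consulted."""
    candidates = NEXT_WORD_PROBS[prompt]
    return max(candidates, key=candidates.get)


prompt = "The capital of Australia is"
print(prompt, most_likely_next_word(prompt))
# prints: The capital of Australia is Sydney
```

The output is fluent and confident, and it is wrong: in this toy table the statistically favoured word simply is not the true one.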

But why exactly does this happen? The model is optimized to produce a fluent, helpful-looking answer to whatever it is asked, and on its own it has no way to check whether a source exists. If you ask it to summarize a scientific article or a literary source that does not actually exist, it may well "create" a plausible author, title, and abstract rather than admit that it does not know. This behavior makes final human oversight mandatory (the so-called human-in-the-loop model). The golden rule: never publish or use AI-generated medical, accounting, legal, or otherwise sensitive content until a qualified professional in the field has verified it carefully and independently.

Corporate Data Privacy and Leak Risks

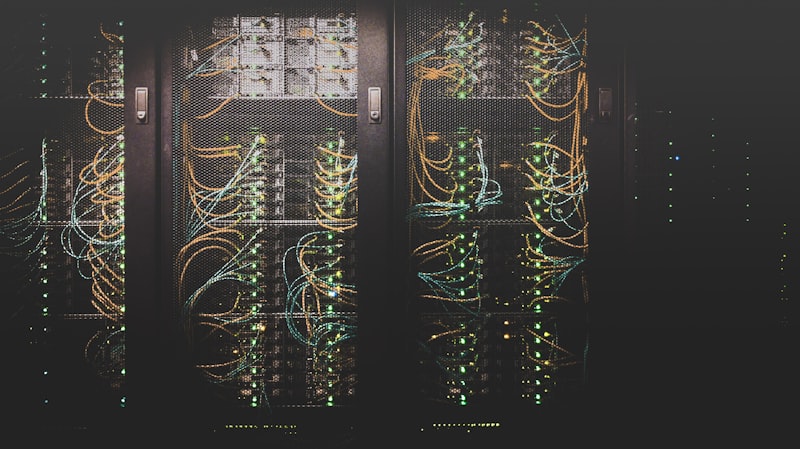

Another major concern is data privacy and security. Whenever you enter information into a free public AI service (such as the base version of ChatGPT), that data may be stored and used to retrain and refine future versions of the model. In practical terms, if your prompts include proprietary source code, next year's commercial strategy, or, even worse, your clients' sensitive personal data, there is a real risk that fragments of this information could resurface months later in a response given to an unknown external user or to a competitor. For professional use, the rule is categorical: never write anything in a public AI chat that you would not be willing to print on a giant billboard. For sensitive data, rely only on paid "Enterprise" versions that contractually guarantee an opt-out from training, or on open-source models run locally in an isolated environment.

Historical Bias and Prejudice in Training Data

Finally, and no less important, we must consider algorithmic bias. AI is not a flawless mirror of reality: it learns from vast quantities of internet text written by human beings. And the internet, as we know, contains and amplifies historical social, racial, gender, and cultural prejudices. This baggage is inevitably reflected in the results the AI returns. If, for example, you ask an image generator to create a portrait of a "successful CEO" or a "surgeon", the model might overwhelmingly propose middle-aged white men; conversely, for "nurse" or "assistant" it will tend to generate female figures. Being a conscious and ethical user means actively recognizing these ingrained patterns and mitigating them by writing inclusive prompts that promote diversity and neutrality. The primary goal is to avoid perpetuating and amplifying harmful, long-standing social stereotypes in our digital communications.

Data Governance and Hallucination Mitigation Strategies

At the enterprise level, mitigating hallucinations cannot rest on prompt engineering alone, or on trusting employees to catch every error. It relies on strict "grounding" architectures (anchoring to facts). Instead of letting the model draw on its own limited and fallible internal memory, the system constrains it to generate responses based exclusively on text retrieved in real time from certified external sources (corporate search engines, policy databases, or verified clinical archives).
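The grounding idea can be sketched in a few lines, under heavy simplifying assumptions. The corpus `CERTIFIED_SOURCES`, the word-overlap retrieval, the `min_overlap` threshold, and the prompt wording are all hypothetical choices made for illustration; a production system would typically use a real search index or vector store, and the resulting prompt would then be sent to whatever model the organization uses.

```python
from __future__ import annotations

import re

# Hypothetical "certified" corpus; in practice a policy database or search index.
CERTIFIED_SOURCES = {
    "travel-policy": "Employees may book business class only for flights longer than six hours.",
    "expense-policy": "Receipts are required for every expense above 25 euros.",
    "security-policy": "Customer data must never be pasted into public chat tools.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, sources: dict[str, str], min_overlap: int = 2) -> list[tuple[str, str]]:
    """Return (source_id, passage) pairs sharing at least `min_overlap` words
    with the question, best match first. Crude on purpose: no stop-word
    handling, no semantic matching."""
    question_words = tokenize(question)
    scored = []
    for source_id, passage in sources.items():
        overlap = len(question_words & tokenize(passage))
        if overlap >= min_overlap:
            scored.append((overlap, source_id, passage))
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(source_id, passage) for _, source_id, passage in scored]


def build_grounded_prompt(question: str, sources: dict[str, str]) -> str | None:
    """Build a prompt that restricts the model to the retrieved passages.
    Returns None when nothing relevant is found, so the caller can answer
    "not found" instead of letting the model improvise."""
    passages = retrieve(question, sources)
    if not passages:
        return None
    context = "\n".join(f"[{source_id}] {text}" for source_id, text in passages)
    return (
        "Answer using ONLY the sources below and cite the source id. "
        "If the answer is not in the sources, reply "
        "'Not found in the certified sources.'\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


print(build_grounded_prompt("Are receipts required for every expense?", CERTIFIED_SOURCES))
print(build_grounded_prompt("Who won the 1998 World Cup?", CERTIFIED_SOURCES))  # prints: None
```

Returning `None` when retrieval finds nothing is the key design choice in this sketch: the application refuses up front rather than asking the model to answer from memory.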

At the same time, enterprise security mandates rigorous input/output filters (guardrails). These are software modules that sit between the user and the language model. A guardrail inspects the user's prompt in real time and blocks requests that are illegal, malicious, or aimed at stealing intellectual property. It also scans the output generated by the AI before it is displayed, filtering out or redacting any leaked personally identifiable information (PII) and any ethically misaligned responses. Professional AI is never "left alone".
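A minimal sketch of such a filter, assuming a keyword deny-list on the input side and regex-based redaction on the output side. The patterns in `BLOCKED_PATTERNS`, the `EMAIL` and `PHONE` expressions, and the function names are illustrative assumptions; real guardrails usually combine trained classifiers with far more robust PII detection, and `model` here stands in for any callable that maps a prompt to a text response.

```python
import re
from typing import Callable

# Illustrative deny-list (an assumption); real systems rarely rely on keywords alone.
BLOCKED_PATTERNS = [
    re.compile(r"\bsource code of\b", re.IGNORECASE),
    re.compile(r"\bbypass (the )?licen[sc]e\b", re.IGNORECASE),
]

# Deliberately simple PII patterns; they will miss many real-world formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def check_input(prompt: str) -> bool:
    """Return True if the prompt matches none of the blocked patterns."""
    return not any(pattern.search(prompt) for pattern in BLOCKED_PATTERNS)


def redact_output(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[REDACTED EMAIL]", text)
    return PHONE.sub("[REDACTED PHONE]", text)


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    """Run the input filter, call the model, then run the output filter."""
    if not check_input(prompt):
        return "Request blocked by policy."
    return redact_output(model(prompt))


def fake_model(prompt: str) -> str:
    """Stand-in for a real language model, used only for this demonstration."""
    return "Contact Maria at maria.rossi@example.com or +39 02 1234 5678."


print(guarded_call("Who handles onboarding?", fake_model))
# prints: Contact Maria at [REDACTED EMAIL] or [REDACTED PHONE].
print(guarded_call("Give me the source code of our competitor's app", fake_model))
# prints: Request blocked by policy.
```

Wrapping the model in a single `guarded_call` function means no code path can reach the model, or show its output, without passing through both filters.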